
Building my new PC

- Thread starter SiliconAngel


SiliconAngel

1 AYC Bar

- Thread starter

- #22

> Over here there has been a great reduction in X58 motherboards available, but there is still a variety available. I guess there is little reason for the X58s now though, unless you want SLI or really do need the extra RAM capacity.

Expect a resurgence next year with the new chip revision (Gulftown).

> If I didn't have an i7 975 system and was building a system right now, I'd have the same motherboard, CPU and the same RAM! I'd get another Silverstone case though, probably a TJ-10 to match my TJ-03, and I still maintain that their TJ-07 case is the most amazing piece of case design ever.

As I said, the key benefit of this one is the six built-in hot-swap bays. I'm sure I'll replace them with 5-in-3 bays down the track, but this whole case was cheaper than the 5-in-3 hot-swap bay on its own!

> I'm a big fan of Areca RAID cards.

Me too; when I bought this card it far outstripped everything in its class. I think 3ware have vastly improved RAID 5 and 6 performance in their latest series of cards, but I haven't seen a direct performance comparison (and I'm not prepared to buy one just to test it).

> One piece of advice I would give you for both the RAID 5 and RAID 0 arrays is to be EXTREMELY careful with running power splitters. Your SNT backplane is fine because all of the bays have cross-linked power from the inputs. However, the Zalman bays aren't; you run an individual power cable to each set of 3 bays. That is fine, but if one bay very briefly loses power your array will be gone! I know you're smart, but just be careful. I've only been bitten by this once and it sucked arse!

Good advice. I haven't had to split anything though; the PSU has more than enough cables to run separate lines to the bays, and I'll be upgrading my UPS in the new year just in case. I'm pretty anal about backups, so if the array fails it will just be a bit of screwing around rebuilding it (OK, for a couple of days...). Yes, Zalman could have designed these better, but I don't know of anyone else making a case with hot-swap bays built in (especially for such a low price!), so hopefully this is the start of a new trend in case design!

> Good lord man, buy a bloody server!

Actually I never just buy servers either; I always custom design, 'cause you can save around 30-40% on exactly the same specced hardware by not buying off-the-shelf. As Brad said, servers are designed for fairly specific purposes, so if you want to do workstation and PC tasks you're already out of server territory. I DID consider a dual-Xeon workstation base, which would be fantastic for computationally heavy work like video editing, but if I ever decide to play a game on it (the last game I played was HL2 three or four years ago, though) it would actually be slower. Let's not forget the additional grand or two it would have cost...

Sorry, I know your comment was flippant, but hopefully taking the time to explain will help others out there who have questions like this and have never known the answer.

> ... & I have to tolerate being called a nerd?

Funnily enough I hardly ever get called a nerd; I think I put myself into that category more than anyone else does. In fact, usually when I say something like that about myself, most people scoff and say 'Trev, there's nothing nerdy about you mate!' *shrugs*

> I'm lucky. I can get away with using a 5 year old small form factor HP package jobbie, hehe. It browses the web... and... uh... that's it.
>
> Just bought some pretty meaty servers for work though. Damn virtualisation.

Haha yeah, everyone's going the virtualisation route; the cost savings can be really tremendous for large organisations. You just need to make sure you have the budget for the right equipment from the outset though: RAID 5 or 6 has to be done on SAS drives to avoid a crippling performance penalty. Or, if you're on a really tight budget, you do what I did on a recent build: run the OS and VMs off a good SSD, store data on the RAID array, and take regular drive images of the SSD in case of failure. Not enterprise class by any means, but it CAN give you a lightning fast server at a third of the price, as long as the client is fully aware of the potential downsides that come with the saving. Sure, they'll still blame you if their server fails at some point, but you just keep reminding them how much you saved them when their budget was tight. A cheap server that does the job is better than no server, or than a cheap server that has more redundancy but can't handle the load. Remember: cheap, reliable and fast, you can only pick two.

bradc

1 AYC Bar

Heh Trev, Gulftown isn't coming next year; one of my best friends has one at 4.2GHz on water. His exact quote:

> Well, I've clocked to 4.15GHz easily. It actually runs a tad cooler than my 975 at 4GHz. All that is due to the wonderful 32nm process.
>
> I was able to score 33K+ in Cinebench10!!! Wow! The best I could do with my 4.8GHz Vapochilled 975 was ~26K.
>
> I'm keeping my Bclk at 166MHz so I can keep my Dominator GT memory at 2000MHz.
>
> ...again my H50 seems to be doing quite well for the $70 pricetag!

Now to be fair, this is the same guy I get my CPUs from. So far I've had 2x P4 EEs, a PD EE, 2x C2D EEs, 2x C2Q EEs and the current i7 EE.

On the hot-swap bay option: while I'll admit that changing HDDs in my Lian Li EX-23A racks takes a good 20 minutes, how often are you really going to be doing it? And do you want to encourage people to hot-swap drives out of your RAID 0 array? I can see it now... "ooohh what does this do" *pull*. Trev: "ohh shit!"
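Why would the quoted Gulftown build sit at a BCLK of 166MHz to keep the Dominator GT memory at 2000MHz? On these Intel platforms the core clock is BCLK times the CPU multiplier, and the DDR3 data rate is BCLK times the memory multiplier. The sketch below runs that arithmetic. The 25x CPU multiplier and 12x memory multiplier are hypothetical, since the post doesn't state them. They are simply values that reproduce the quoted 4.15GHz and roughly 2000MHz.

```python
def derived_mhz(bclk_mhz: float, multiplier: float) -> float:
    """Clock derived from the base clock (BCLK) times a multiplier, in MHz."""
    return bclk_mhz * multiplier

def bclk_for_target(target_mhz: float, multiplier: float) -> float:
    """BCLK needed to reach a target derived clock with a given multiplier."""
    return target_mhz / multiplier

BCLK_MHZ = 166       # from the quoted post
CPU_MULTIPLIER = 25  # hypothetical: not stated in the post
MEM_MULTIPLIER = 12  # hypothetical DDR3 ratio: not stated in the post

print(derived_mhz(BCLK_MHZ, CPU_MULTIPLIER))            # 4150
print(derived_mhz(BCLK_MHZ, MEM_MULTIPLIER))            # 1992
print(round(bclk_for_target(2000, MEM_MULTIPLIER), 1))  # 166.7
```

With a 12x memory ratio, 2000MHz needs a BCLK of about 166.7MHz. So 166MHz lands the memory just under its rated speed while also giving a round 4.15GHz core clock.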

SiliconAngel

1 AYC Bar

- Thread starter

- #24

> Heh Trev, Gulftown isn't coming next year, one of my best friends has one at 4.2ghz on water

Just 'cause some people have engineering samples or even early-release silicon doesn't mean anything about the final commercial release. The number of people out there playing with early Gulftowns isn't going to affect sales of diddly squat; it's demand from the masses that will affect stocked product levels. And yes, I know you were just being a smartass.

> On the hot swap bay option, now while I'll admit that to change HDD's in my Lian Li EX-23A racks will take a good 20 minutes, how often are you really going to be doing it, and do you want to encourage people to hot swap drives out of your raid 0 array? I can see it now.... "ooohh what does this do" *pull*. Trev: "ohh shit!"

Nah, I can count on one hand the number of people who ever see the inside of my office, and they know enough not to go near my gear. My girl quivers with fear at the thought of touching my keyboard, let alone the actual box.

Oh, I found out yesterday the 3-in-2 won't be available until the 21st of Jan now, nor will the 2.5-3.5" bay adapter for the SSD, and I STILL haven't heard from Thermalright about the new 1156 mounting bracket for the IFX-14. But that's OK, 'cause I can still get everything configured and installed, as all the RAM and drives are now in. I just won't be able to finish assembly, so it will live on my desk in pieces for another month or so *sigh*

bradc

1 AYC Bar

The racks are available here.

http://www.procase.co.nz/backplane_modules/snt_2T3SAS.html

It's the SAS version, but I could have one to you this side of Xmas.


SiliconAngel

1 AYC Bar

- Thread starter

- #26

Mate, PM me a price and I'll happily take a look. Thanks!

bradc

1 AYC Bar

I will try to remember on Monday!

SiliconAngel

1 AYC Bar

- Thread starter

- #28

I've been gradually doing some benchmarking and testing of this when I get an opportunity, thought I'd share some results. First, the disk performance on this thing is insane...

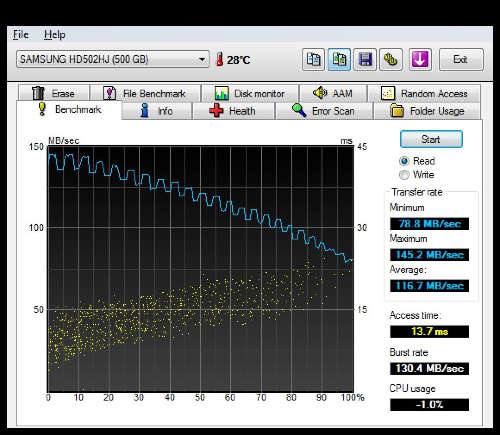

Here's an HD Tune Pro sequential read test on a Samsung F3 500GB drive, one of the fastest consumer SATA drives currently available:

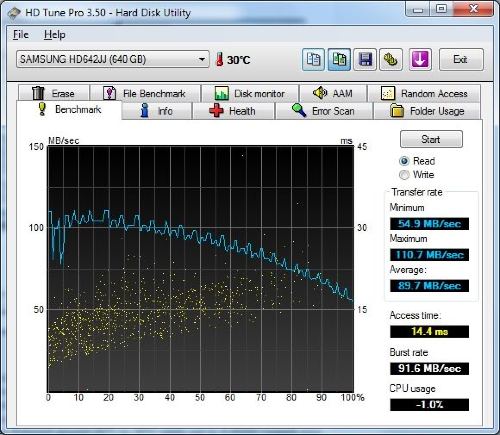

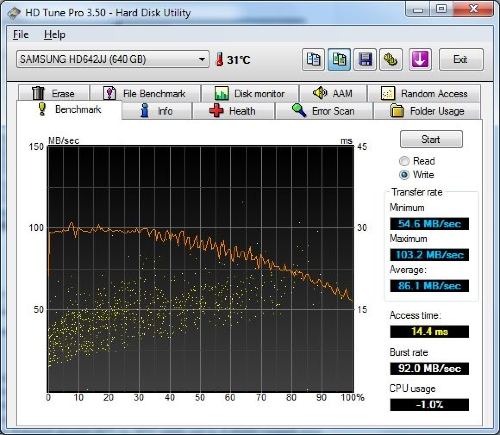

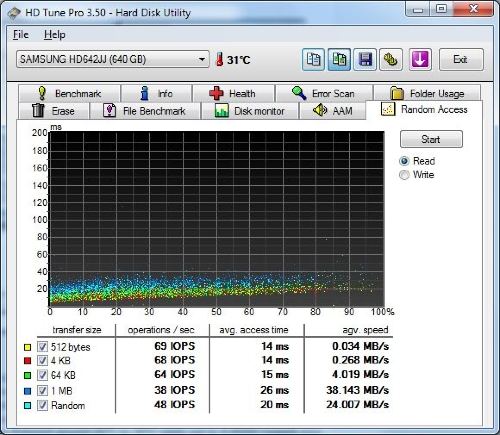

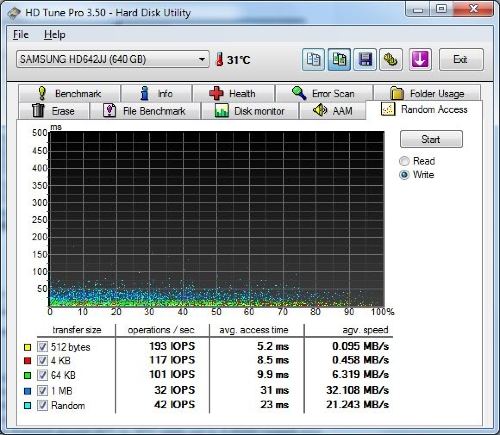

But I realised that's not exactly indicative of what most people have, so here are some benchmarks of an older Samsung Spinpoint F1 640GB drive. It was one of the first perpendicular recording drives with two 320GB platters; these were a top-class drive a couple of years ago and still aren't a bad drive today (although they have huge compatibility issues in RAID configurations).

Sequential Read Benchmark:

Sequential Write Benchmark:

Random Access Reads:

Random Access Writes:

The above tests are a baseline, to give you an idea of how average mechanical drives perform. Now we get to the fun stuff; here are the sequential read tests for my drives:

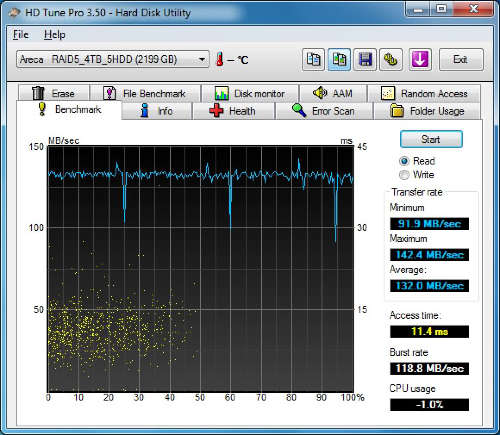

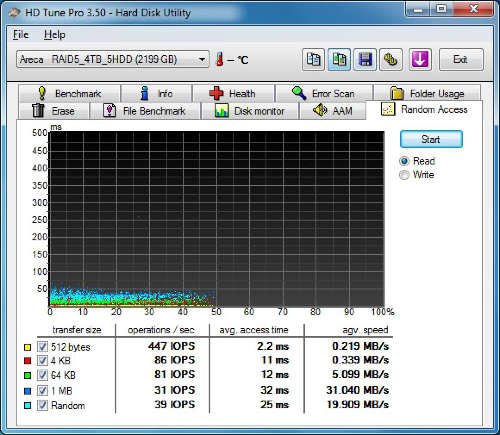

RAID5 array of 5x Samsung Spinpoint F3 1TB drives:

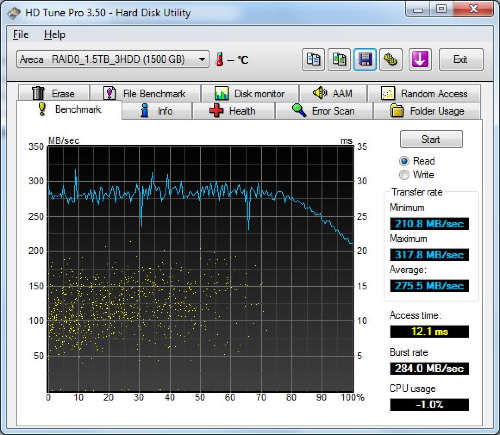

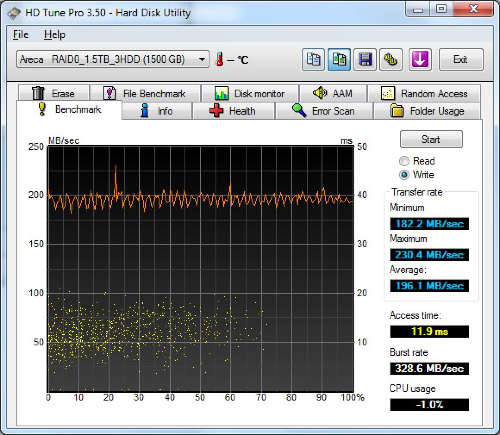

RAID0 on three Samsung F3 500GB drives:

Whoa! Those are some impressive numbers!

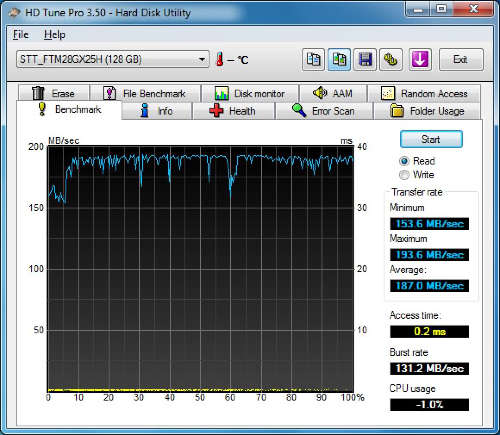

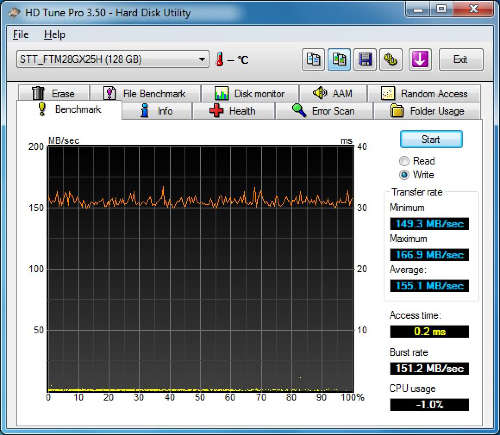

Here's the SuperTalent UltraDrive ME in action:

That ridiculously low seek (access) time is where this drive really shines, but the bandwidth figures are still pretty darn impressive. Imagine what you'd get with three of THESE puppies in RAID0!!
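Trev wonders what three of these SSDs would do in RAID0. A best-case sketch helps frame that. It assumes ideal striping, where capacity and sequential throughput add up across drives until a controller or bus ceiling caps them. Real arrays fall short of this, as Brad notes for arrays of more than 4 drives. Striping also doesn't shorten the access time of an individual request. All the input numbers below are hypothetical placeholders, not values read from the screenshots.

```python
from typing import Optional, Tuple

def ideal_raid0(per_drive_mb_s: float, drive_count: int, drive_gb: float,
                bus_limit_mb_s: Optional[float] = None) -> Tuple[float, float]:
    """Best-case RAID 0 figures: (sequential MB/s, capacity in GB).

    Striping sums capacity and sequential throughput; an optional
    controller/bus ceiling caps the throughput.
    """
    if drive_count < 1:
        raise ValueError("need at least one drive")
    throughput = per_drive_mb_s * drive_count
    if bus_limit_mb_s is not None:
        throughput = min(throughput, bus_limit_mb_s)
    return throughput, drive_gb * drive_count

# Hypothetical inputs for illustration only.
print(ideal_raid0(100, 3, 500))                      # (300, 1500)
print(ideal_raid0(200, 3, 128, bus_limit_mb_s=500))  # (500, 384)
```

The ceiling parameter is there because fast SSDs can saturate the controller or its link before they saturate themselves, so the naive "times three" figure is an upper bound.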

I only have write tests for the RAID0 and SSD, not the RAID5 array, because you have to have blank drives to run the write tests and I already had too much data on the RAID5 array. So here's the sequential write test for the RAID0 disks:

And for the SSD:

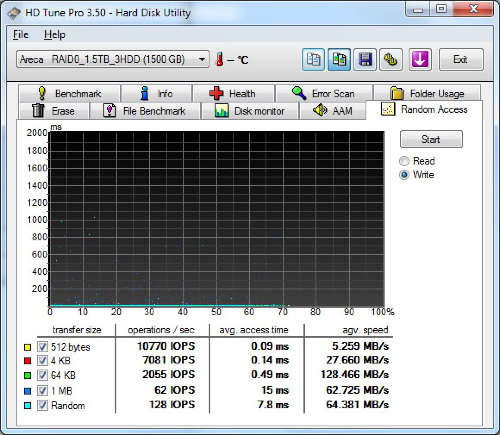

Random read tests, firstly for the RAID5 disks:

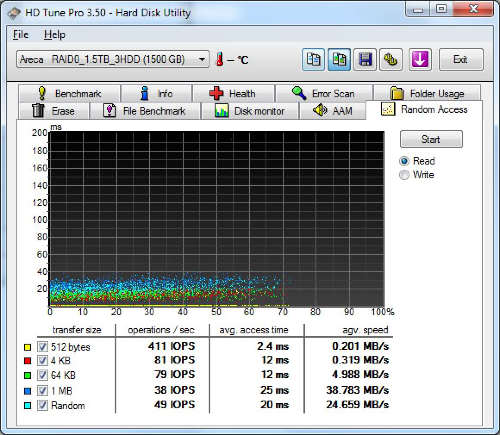

RAID0:

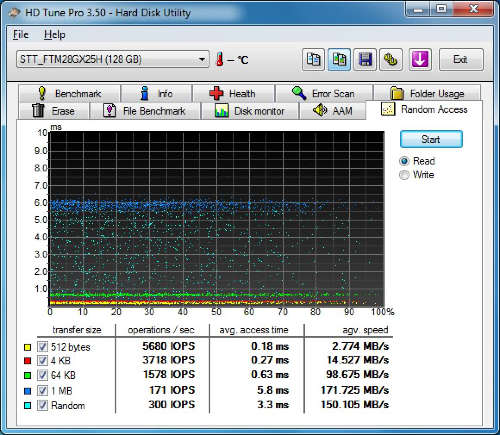

And the SSD:

Random write for the RAID0:

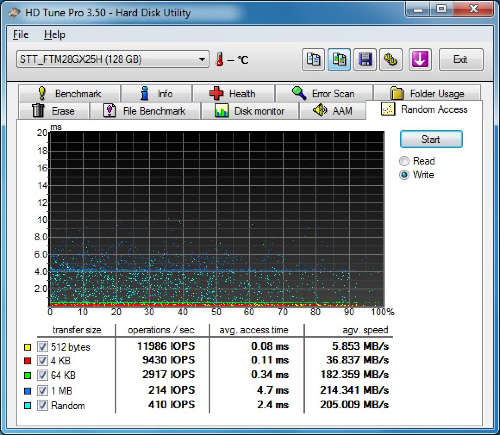

And for the SSD:

You can really see the amazing performance potential of SSDs in random access tasks; they are just so much faster than traditional HDDs, it's insane.


SiliconAngel

1 AYC Bar

- Thread starter

- #29

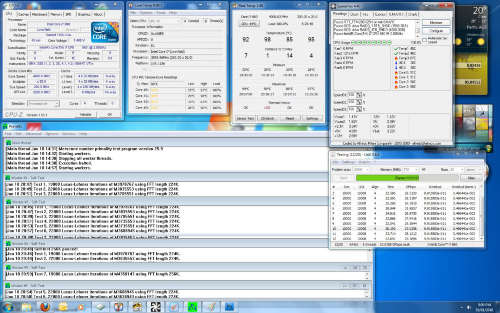

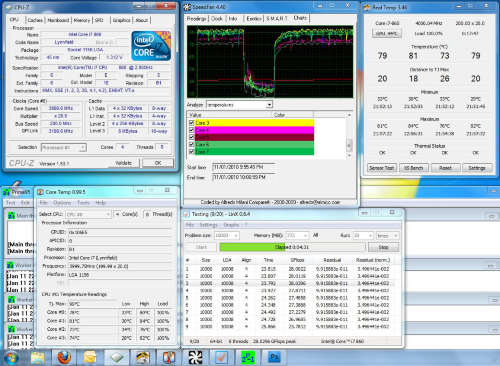

Finally, here's the Core i7 860 @ 4GHz. I ran Prime95-64 all day (a little over 10 hours) and the PC was 100% stable. I was even able to open up Photoshop64 and process screenshots without turning Prime95 off, and it was still snappy and responsive! So while temps are a little on the high side, I have no concerns about stability, especially considering it won't hit temps that high very often in normal use.

bradc

1 AYC Bar

For your first RAID 5 test there, you should click on the settings icon near the top right (the one with the two yellow gears) and change the size to a larger amount. I've found that on arrays with more than 4 drives the performance doesn't scale as it should.

Also note that your access times are very, very good. This is because HD Tune only looks at the access time over the first 1TB (or 1TiB, I can't remember) of a disc. If you look at your graphs, they stop at the 1TB mark!

Your overclock is good considering how hot the CPU is. I'm surprised it is that hot! My i7 975 running at 4GHz gets up to about 77°C with a Cooler Master V8 on it. I was running it at default voltage though. CPU-Z reports 1.408V there; is that correct?
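Taking Brad's point at face value (the claim that HD Tune's access-time test only covers the first 1TB comes from the post and isn't verified here), a quick sketch shows how small a slice of the RAID5 array that is. It assumes the standard RAID 5 rule that one drive's worth of capacity goes to parity. It also uses decimal 1TB drives and ignores formatting overhead.

```python
TB = 10**12   # decimal terabyte, as drive makers count
TIB = 2**40   # binary tebibyte

def raid5_usable_bytes(drive_count: int, drive_bytes: int) -> int:
    """Usable RAID 5 capacity: one drive's worth of space goes to parity."""
    if drive_count < 3:
        raise ValueError("RAID 5 needs at least 3 drives")
    return (drive_count - 1) * drive_bytes

usable = raid5_usable_bytes(5, 1 * TB)  # 5x 1TB F3 drives -> 4TB usable
for label, window in (("1TB", TB), ("1TiB", TIB)):
    # Share of the array's address range a first-1TB(iB) test would touch
    print(f"{label} window covers {window / usable:.1%} of the array")
```

This prints 25.0% for a 1TB window and 27.5% for a 1TiB one. Seeks confined to roughly the first quarter of the address range are short, which would tend to flatter the reported access time.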


From what I can see you are running LinX and Prime at the same time. That is an insane stress test, although I am a firm believer that LinX is overkill (99% of the time) when testing the stability of a CPU. What are your normal temps, and what are your temps when just running Prime?

SiliconAngel

1 AYC Bar

- Thread starter

- #32

> For your first Raid 5 test there, you should click on the settings icon up near the top right with the two yellow gears and change the size to a larger amount. I've found that on arrays with more than 4 drives the performance doesn't scale as it should.
>
> Also note that your access times are very very good. This is because HD Tune only looks at the access time over the first 1TB (or 1TiB, I can't remember) of a disc. If you look at your graphs it stops at the 1TB mark!
>
> Your overclock is good considering how hot the cpu is. I'm surprised it is that hot! My i7 975 running at 4ghz gets up to about 77C with a Coolermaster V8 on it. I was doing it at default voltage though, CPUZ reports 1.408v there, is that correct?

Brad, I'll re-run HD Tune with some updated settings when I get some time. CPU voltage is set to 1.3625V, but because I have Gigabyte's dynamic throttling enabled to let it reduce power consumption at idle, it also appears to be increasing the CPU voltage quite dramatically. The upside is that with the lapped IFX-14 it seems to handle the stress quite well (i.e. it's 100% stable), but that voltage and the resulting temps are a little concerning. When I get a chance I'll turn off all the dynamic throttling stuff and see how low I can get the CPU and QPI voltage while keeping it stable, but I don't actually want it running at 4GHz all the time. When it idles at 1.8GHz the power consumption drops to 100W, and it goes even lower if it's been sitting around for 20 mins and the RAID drives all power down. At full throttle it burns over 400W, and that's with the graphics card still sitting idle (I haven't run any graphics benchmarks yet).
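The idle and full-load figures Trev quotes (100W idling at 1.8GHz, over 400W flat out) are why letting the clock drop at idle matters. Daily energy is just power times hours in each state. In the sketch below, 400W is treated as a floor since the post says "over 400W". The 4 hours of full load per day is a hypothetical duty cycle, not something from the thread.

```python
def daily_energy_kwh(idle_w: float, load_w: float, load_hours: float) -> float:
    """Energy per day in kWh for a machine split between idle and full load."""
    if not 0 <= load_hours <= 24:
        raise ValueError("load_hours must be between 0 and 24")
    idle_hours = 24 - load_hours
    return (idle_w * idle_hours + load_w * load_hours) / 1000

# 100 W idle and 400 W load come from the post; 4 h/day of load is hypothetical.
print(daily_energy_kwh(100, 400, 4))   # 3.6
print(daily_energy_kwh(100, 400, 24))  # 9.6 (pinned at full load all day)
```

Under that assumed duty cycle the machine uses well under half the energy it would if it ran at 4GHz and full load around the clock.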

> from what i can see you are running Linx and prime at teh same time. That is an insane stress test. Although i am a firm believer that Linx is overkill (99% of the time) when testing stability of a cpu. What are you normal temps? and what are your temps when just running prime?

Normal temps are around 5 to 7°C above ambient. For example, right now the thermal probe inside the case, in the direct path of outside air being pumped in by a 120mm fan, shows 29°C. The probe on the HSF core shows 32°C, and RealTemp etc. show core readings between 32 and 36°C.

Yes, at that time I was running LinX and Prime95-64 concurrently, but Prime95 on its own was only a degree or two lower than with LinX running as well.

I'm certain those insane temps are just due to the overly high voltage; if I force it to lock in something lower, I have no doubt the temps will drop radically. It's just a matter of finding the time to do some tweaking now, but at this stage I'm at least happy that the system is capable of that clock rate when I need it without sacrificing stability.

SiliconAngel

1 AYC Bar

- Thread starter

- #33